
Your initial response to this question is undoubtedly, “Of course they do!” And your answer would have been formulated through a series of brain functions responsible for logical reasoning. The bad news is that logical reasoning has no place in the response to this question, at least not according to a recent article and report dated August 19, 2009, by U.S. News & World Report entitled “America’s Best Colleges 2010.” The report is replete with names of institutions of higher learning that we equate with true excellence in education, with Harvard, Princeton, the Massachusetts Institute of Technology, and the California Institute of Technology taking the top positions. But was it through a process of logical evaluation and reasoning that these leaders of education once again placed in the Top Ten? Hardly. How they got to the top of the list will surely surprise you. And here it is in a nutshell, as taken from the article itself:

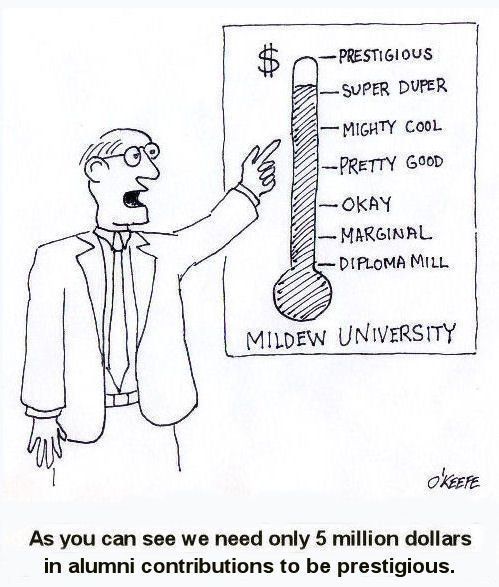

So what are the factors used for assessment of America’s top colleges? The first stated criterion is the number graduating within six years. Now call me old-fashioned, but I thought a Bachelor’s Degree was traditionally to be earned within four years. And how these graduates perform in the real world is anyone’s guess, because no assessment is offered. This is followed by “retention rates,” meaning the number of students who remain with the institution after one year. If marriages were measured by this yardstick we’d be in real trouble. Then the criteria get really interesting. Interesting in that their sole focus seems to be on the institution’s financial resources: “…faculty resources, financial resources, student selectivity, and alumni giving account for 75 percent of a college’s score.” “Student selectivity” means the institution’s method for selecting its student body. And in case you missed it the first time around, here’s the cherry on top of this massive pile of whipped cream: “The other 25 percent is based on a peer assessment survey…” Hmm, educators assessing other educators. Isn’t that akin to letting the other teenagers in the subdivision decide whether your teen is toeing the line within your household or not?

Rankings are wonderful tools if they are based on an objective, unbiased assessment. When it comes to objectively assessing engineers, one organization stands head and shoulders above the rest: the National Council of Examiners for Engineering and Surveying (NCEES). It is the same entity responsible for the licensing of Professional Engineers. Here’s what they have to say about their assessment process and objectivity, as found through this link: http://www.ncees.org/asd.php

Which assessment process would you trust to hold up your cherry?

Tags: education, engineering schools, licensed professional engineer, National Council of Examiners for Engineering and Surveying, NCEES, PE, problems with education, quality of education